Technical deep-dive: ISO 42001 and AIUC-1

AIUC-1 and ISO 42001 have emerged as the leading certifications for organizations adopting AI. The right choice depends on your goals. AIUC-1 validates AI agent security, safety and reliability, focusing on top enterprise risks such as data leakage, hallucinations and harmful outputs. ISO 42001, in contrast, validates that your organization has the governance and policies in place to manage AI responsibly.

As AI agents gain autonomy, using tools and making decisions without human intervention at every step, the risks multiply. These range from technical risks such as data leakage, prompt injection and harmful outputs to governance risks such as unclear accountability when agents fail.

Two schools of thought are emerging on how best to manage these risks. The first emphasizes clear policies and documented governance procedures. This approach is at the heart of ISO 42001, which extends the classic management system standard with new AI-specific policies.

The second school holds that policies and governance alone are insufficient, and that a standard for agentic AI must be grounded in technical testing, given both the rapidly changing AI risk landscape and the probabilistic nature of AI agents. AIUC-1 is at the center of this school.

AIUC-1 addresses AI agent risks that ISO 42001 wasn’t designed for

ISO 42001 rightly recognizes that many AI failures stem from organizational weaknesses, such as a lack of ongoing monitoring or unclear accountability. However, as an AI management system standard, it doesn't prescribe technical controls or testing methodologies for the security, safety and reliability risks that agentic AI introduces.

AIUC-1 fills this gap. It covers core AI governance risks in its Accountability section but goes further, addressing specific AI agent security, safety and reliability risks. These include requirements for third-party technical testing (e.g., B001: Third-party testing of adversarial robustness) and technical control reviews (e.g., C003: Prevent harmful outputs, which specifies how companies can enforce harmful output filtering through mechanisms like customer-classified model settings).

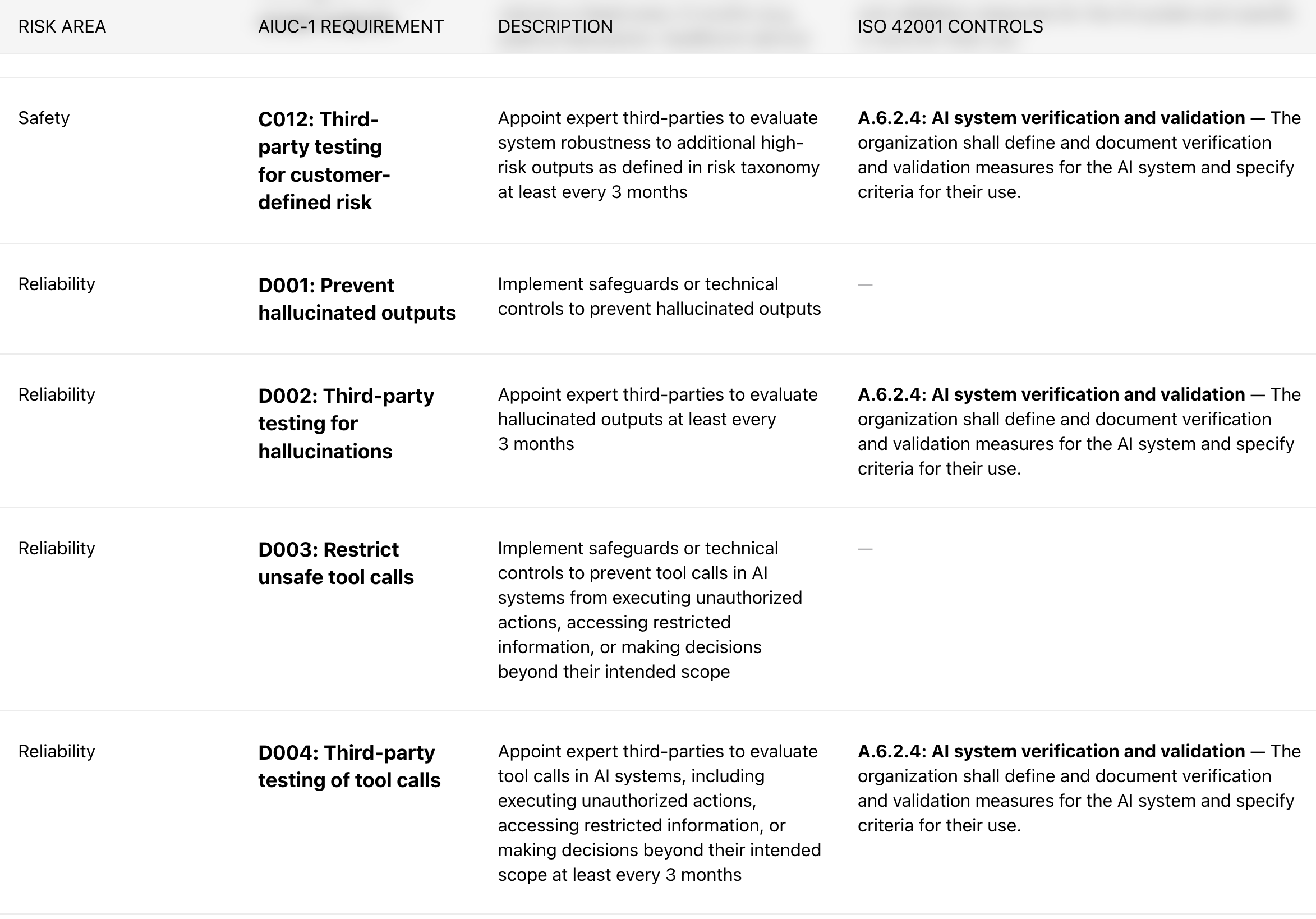

For a detailed comparison of the two standards, a crosswalk mapping ISO 42001's coverage against AIUC-1's is available.

Excerpt from the crosswalk mapping AIUC-1 requirements to relevant ISO 42001 controls

Determining the right level of governance documentation

For organizations that need comprehensive AI management system documentation, ISO 42001 provides exactly that. It requires exhaustive documentation of high-level objectives, governance processes and formalized responsible AI commitments (e.g., A.5.5: Assessing societal impacts of AI systems).

Conversely, AIUC-1 prioritizes the ISO 42001 requirements that enterprise customers demand and vendors can pragmatically meet. Most controls in AIUC-1's Accountability domain map directly to ISO 42001. For instance, AIUC-1's requirement to document a system transparency policy (E017) maps to ISO 42001's 5.2: AI policy, and AIUC-1's requirement to implement a quality management system (E013) maps to ISO 42001's 4.4: AI management system. The detailed mapping between ISO 42001 and AIUC-1 requirements is available in our published crosswalk.

In practice, this means organizations pursuing AIUC-1 certification gain coverage of the AI governance controls that matter most to enterprise buyers, in addition to technical confidence in the robustness and security of their AI agents.

AIUC-1 evolves with technology, risks and emerging regulations

ISO 42001 is well regarded as an adaptable, risk-based governance framework. Since its release in 2023, certification against ISO 42001 has served as objective evidence of an organization's due diligence and reasonable care around AI while regulatory frameworks continue to evolve. As a governance standard, its formal review cycle of five or more years makes sense, because governance principles don't change rapidly. The AI agent threat landscape, however, does.

AIUC-1 is formally updated each quarter to keep pace with evolving technology, risk and regulation. In its most recent refresh, in January, AIUC-1 expanded to cover voice agent modalities (e.g., C009: Enable real-time feedback and intervention; E016: Implement AI disclosure mechanisms) and introduced new controls informed by emerging threat frameworks (e.g., A006: Prevent PII leakage; B009: Limit output over-exposure). To ensure the standard builds on the latest adversarial threat research, AIUC-1 continuously publishes crosswalks to trusted frameworks such as MITRE's ATLAS and OWASP's Top 10 for Agentic AI, as well as to emerging legislation such as the EU AI Act.

The era of AI agents demands a technically grounded standard

AI agents introduce ever-evolving risks that organizational governance alone can't catch, from safety risks like harmful outputs to security risks like adversarial vulnerabilities. Their probabilistic nature and the rapid pace of technological change mean that confident adoption requires a standard grounded in technical testing, not just policies and documentation.

ISO 42001 remains valuable for establishing a strong AI governance foundation. However, AIUC-1 is purpose-built for organizations deploying AI agents into production, combining core governance controls with technical requirements validated through quarterly technical evaluations by independent third parties.

To learn more about AIUC-1 certification and the typical process involved, get started here.
