Looking ahead: Consortium feedback shaping the Q3 2026 update

Over 200 peer-review comments were exchanged during the Q2 2026 update of AIUC-1. Many were implemented in that update, while others required more work and were carried forward as priority areas for the Q3 update.

This quarter saw the most engaged standard update cycle since the launch of AIUC-1, a direct result of the Consortium growing to 200+ CISOs, GRC leaders, practitioners, and academics from leading organizations around the world.

As a result, 14 requirements and 23 controls were added or updated in the Q2 release, with many more ideas surfaced for future updates. This post outlines the criteria used to determine what becomes codified, the tenets guiding every update, and the priority areas carried forward into the Q3 update.

From peer-reviewed idea to codified control

For a proposed idea to move from discussion to a codified control or requirement within AIUC-1, it must meet the following three criteria:

- Agreed best practice. There is industry convergence on the control’s approach to addressing enterprise risks. This shows up in published peer-reviewed research from bodies like NIST, OWASP, or CSA, and in convergent guidance across leading AI security vendors.

- Adopted in industry. The control is operational or rapidly becoming operational in industry - whether implemented internally or demanded of AI vendors. Codifying it in AIUC-1 reflects what is happening in practice and avoids introducing unreasonable compliance burdens. This is tested through Consortium deployment experience - if CISOs at multiple member organizations are already demanding the control, the evidence is clear.

- Auditable through clear evidence. Meeting the control can be demonstrated through concrete, auditable evidence - legal policies, technical implementation, operational practices, or third-party evaluations - allowing accredited auditors to assess conformance consistently.

In addition to the three criteria, new requirements and controls included in each standard update must also align with the overall AIUC-1 tenets.

Tenets that guide the standard update

- Customer-focused. Requirements that enterprise customers demand and vendors can pragmatically meet are prioritized, to increase confidence without adding unnecessary compliance burden.

- AI-focused. Non-AI risks that are addressed in frameworks or regulations like SOC 2, ISO 27001, or GDPR are not covered.

- Insurance-enabling. Risks that lead to direct harms and financial losses are prioritized.

- Adapts to regulation. AIUC-1 is updated to ensure easier alignment with evolving regulation and laws.

- Adapts to AI progress. AIUC-1 is updated to keep up with new capabilities, from reasoning capabilities to new modalities.

- Adapts to the threat landscape. AIUC-1 is updated in response to real-world incidents.

Ideas that align with where the industry is heading - but where there’s no clear consensus yet - are put into working groups for further exploration and revisited in later updates once the practice matures. The three priority areas below each fit this pattern.

Three priority areas carried forward to the Q3 standard update

1) Agent runtime governance

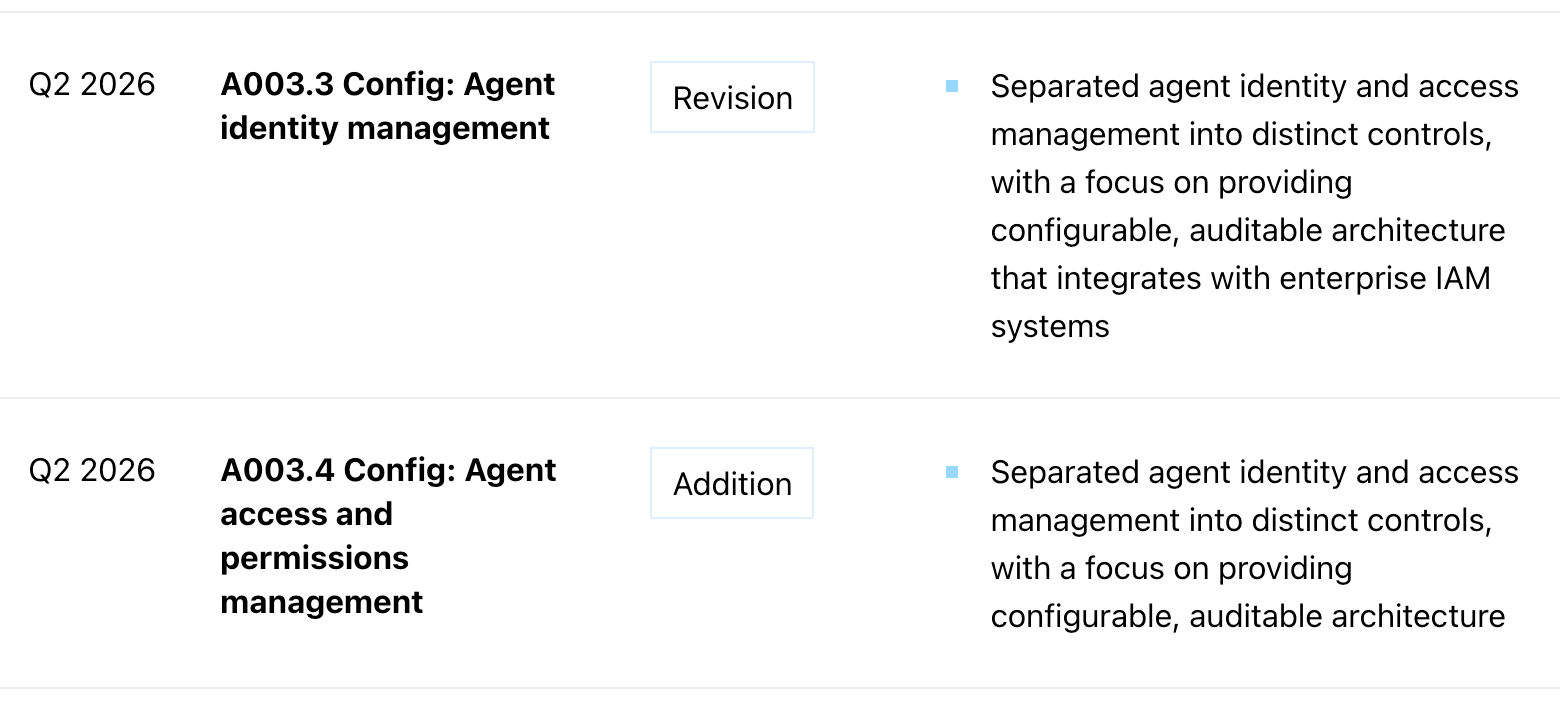

The Q2 update introduced foundational controls for agent identity (A003.3) and permissions (A003.4), mandating that AI agent companies enable agent identity and access management by building permission-ready architecture.

Q2 2026 Changelog for A003

The open question raised in the Q2 peer review is whether runtime governance should be introduced, extending beyond these foundations of identity verification at authentication time and permissions management at configuration time. Technical guidance around agentic runtime governance - evaluating agent behavior at execution time - is still taking shape. In addition, Consortium members are actively debating where the responsibility should sit: on the AI agent vendor to enable runtime governance enforcement, or on the downstream enterprise and end user deploying the agent.

Leading up to the Q3 update, we will explore whether and how AIUC-1 should define outcome-based runtime governance requirements, such as behavioral monitoring or interruption mechanisms for agents that deviate from expected patterns.
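To make the distinction between configuration-time permissions and execution-time evaluation concrete, here is a minimal sketch of an interruption mechanism. It is an illustration only, not an AIUC-1 requirement: the `RuntimeGuard` class, the `AgentInterrupted` exception, the tool names, and the call budget are all hypothetical.

```python
from dataclasses import dataclass, field


class AgentInterrupted(Exception):
    """Raised when the guard halts an agent that deviates from expected behavior."""


@dataclass
class RuntimeGuard:
    # Hypothetical policy: the tools the agent is expected to use,
    # plus a cap on the number of tool calls in a single run.
    allowed_tools: frozenset
    max_calls: int = 20
    history: list = field(default_factory=list)

    def check(self, tool_name: str) -> None:
        """Evaluate one tool call at execution time, before it runs."""
        self.history.append(tool_name)  # keep a trace for behavioral monitoring
        if tool_name not in self.allowed_tools:
            raise AgentInterrupted(f"unexpected tool: {tool_name}")
        if len(self.history) > self.max_calls:
            raise AgentInterrupted("call budget exceeded")


guard = RuntimeGuard(allowed_tools=frozenset({"search", "summarize"}), max_calls=3)
guard.check("search")  # expected behavior, passes silently

try:
    guard.check("send_email")  # deviates from the expected pattern
    interrupted = False
except AgentInterrupted:
    interrupted = True
```

Whether a check like this belongs in the vendor's product or in the deploying enterprise's environment is exactly the responsibility question under debate; the sketch takes no position on it.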

2) Risk-based logging

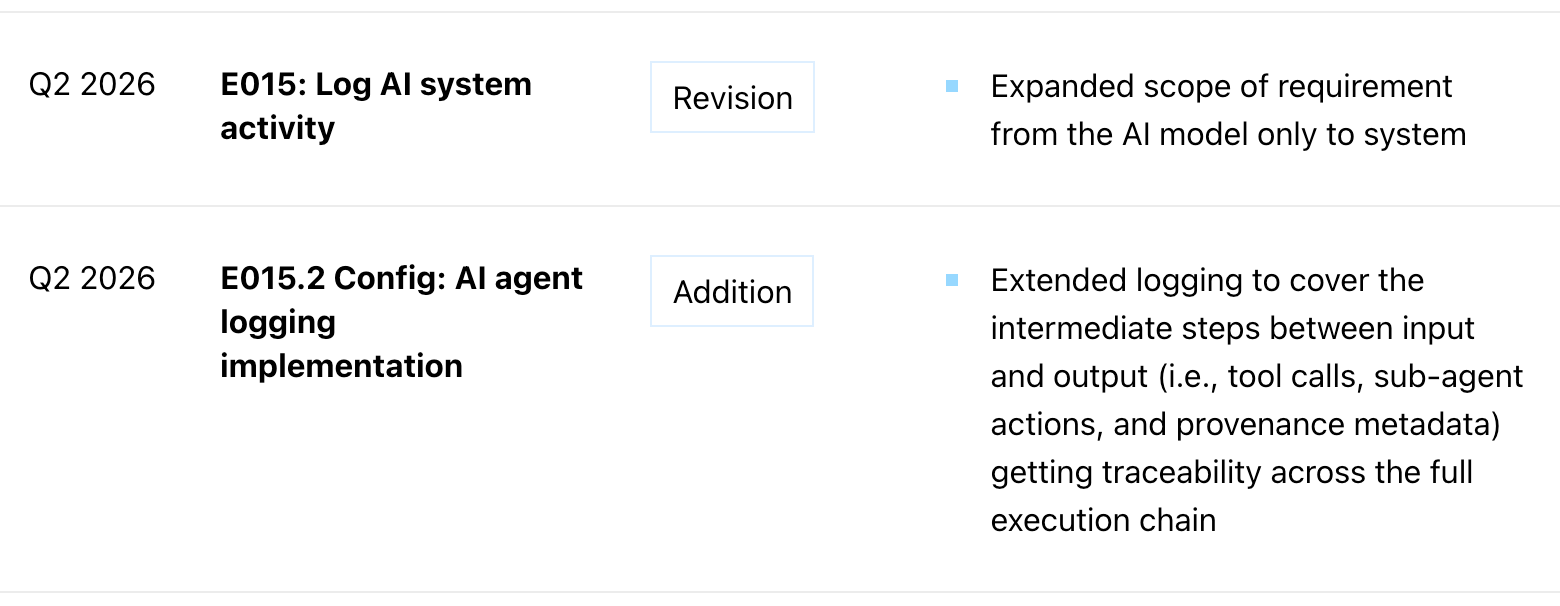

The Q2 update extended logging to cover agent execution (E015.2), ensuring that multi-step agent activity is captured alongside traditional interaction logs. Consortium members flagged a nuance: logging scope should be driven by threat modelling, which identifies the risks that warrant monitoring, rather than by uniform logging obligations applied across all agent types.

Q2 2026 Changelog for E015

The Q3 update will explore the introduction of risk-tiered logging requirements - calibrating logging obligations based on agent capabilities such as tool access, data sensitivity, and level of autonomy. This work will account for the fact that the downstream enterprise or end user deploying the agent is often best placed to determine what to alert on, with the threat model, risk appetite, and operational context varying by use case.
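As a rough illustration of what risk-tiered logging could look like, the sketch below maps the three capability dimensions named above to a logging tier. The scoring scheme, the autonomy scale, and the tier names are assumptions made for this example and are not drawn from AIUC-1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    has_tool_access: bool
    handles_sensitive_data: bool
    # Hypothetical scale: 0 = human approves every step, 2 = fully autonomous.
    autonomy_level: int


def logging_tier(profile: AgentProfile) -> str:
    """Return an illustrative logging tier for an agent's capability profile."""
    score = (
        int(profile.has_tool_access)
        + int(profile.handles_sensitive_data)
        + profile.autonomy_level
    )
    if score >= 3:
        return "full_execution_trace"      # every step, tool call, and input/output
    if score >= 1:
        return "tool_calls_and_outcomes"   # actions taken, without full traces
    return "interaction_only"              # traditional interaction logs


faq_bot = AgentProfile(has_tool_access=False, handles_sensitive_data=False, autonomy_level=0)
ops_agent = AgentProfile(has_tool_access=True, handles_sensitive_data=True, autonomy_level=2)

faq_tier = logging_tier(faq_bot)
ops_tier = logging_tier(ops_agent)
```

In practice the thresholds would come from the deployer's own threat model and risk appetite rather than a fixed additive score, which is why alerting decisions are left out of the sketch.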

3) Third-party access governance

The Q2 update made third-party access monitoring mandatory and extended controls to the AI-specific third-party risk surface (E009). Consortium members proposed that E009 expand beyond logging and metadata capture to include complementary monitoring and access controls such as:

- IP allowlisting for interactive third-party user access.

- IP and client allowlisting for non-interactive (M2M) third-party access, including API and MCP connections.

- Real-time alerting on anomalous third-party access patterns.

- Automated response mechanisms for suspicious third-party activities.

This proposal is increasingly urgent given the rise of AI-augmented supply chain attacks that target vulnerable third-party components, a class of risk that only grows as agents autonomously connect to more external services. It will be explored for inclusion in the Q3 update.
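The allowlisting proposals above can be sketched with Python's standard `ipaddress` module. This is a minimal example under stated assumptions: the network range (a reserved documentation range), the client identifier, and the function name are hypothetical, and a real deployment would typically enforce this at a gateway or identity provider rather than in application code.

```python
import ipaddress
from typing import Optional

# Hypothetical allowlists. 203.0.113.0/24 is a range reserved for documentation.
ALLOWED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]
ALLOWED_CLIENTS = {"partner-mcp-client"}


def is_access_allowed(source_ip: str, client_id: Optional[str] = None) -> bool:
    """Check third-party access against the allowlists.

    Interactive access (no client_id) is checked by IP only.
    Non-interactive (M2M) access must match both the IP and client allowlists.
    """
    addr = ipaddress.ip_address(source_ip)
    ip_ok = any(addr in network for network in ALLOWED_NETWORKS)
    if client_id is None:
        return ip_ok
    return ip_ok and client_id in ALLOWED_CLIENTS


interactive_ok = is_access_allowed("203.0.113.7")
m2m_ok = is_access_allowed("203.0.113.7", client_id="partner-mcp-client")
m2m_unknown_client = is_access_allowed("203.0.113.7", client_id="unknown-client")
outside_range = is_access_allowed("198.51.100.1")
```

A denied request is also a natural trigger for the real-time alerting and automated response items in the list, which this sketch does not implement.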

Other areas under active review

Additional ideas surfaced in peer-review that we are actively evaluating ahead of Q3 include:

- AI agent behavior drift. Detecting and responding to changes in agent behavior over time caused by memory accumulation or environmental shifts.

- Customer notification for changes to data usage. Governing how changes to customer data usage are handled, with obligations ranging from customer disclosure to customer approval.

The rigor of each quarterly update reflects the depth of the Consortium feedback behind it. We also welcome input from the wider ecosystem - share your feedback here.

All updates to the standard are documented transparently, with the full changelog accessible here.
