AIUC-1 prepares organizations to meet emerging state and local AI laws

Crosswalks mapping AIUC-1 to local and regional AI legislation from New York City, Colorado and California are now available, offering organizations a clear pathway to begin preparing for upcoming legal obligations.

State and local AI legislation is emerging

While federal AI regulation is being developed, compliance obligations are being defined at the state and local level, with some already enforceable. The three laws covered here represent different layers of the AI stack:

- NYC Local Law 144 governs how AI is used in employment decisions

- The Colorado AI Act governs the development and deployment of high-risk AI systems across consequential domains

- California's Transparency in Frontier AI Act (TFAIA) governs the development of the most powerful frontier models

Together they cover some of the most significant compliance pressure points for organizations building or deploying AI agents in the U.S. today.

How AIUC-1 maps to the three laws

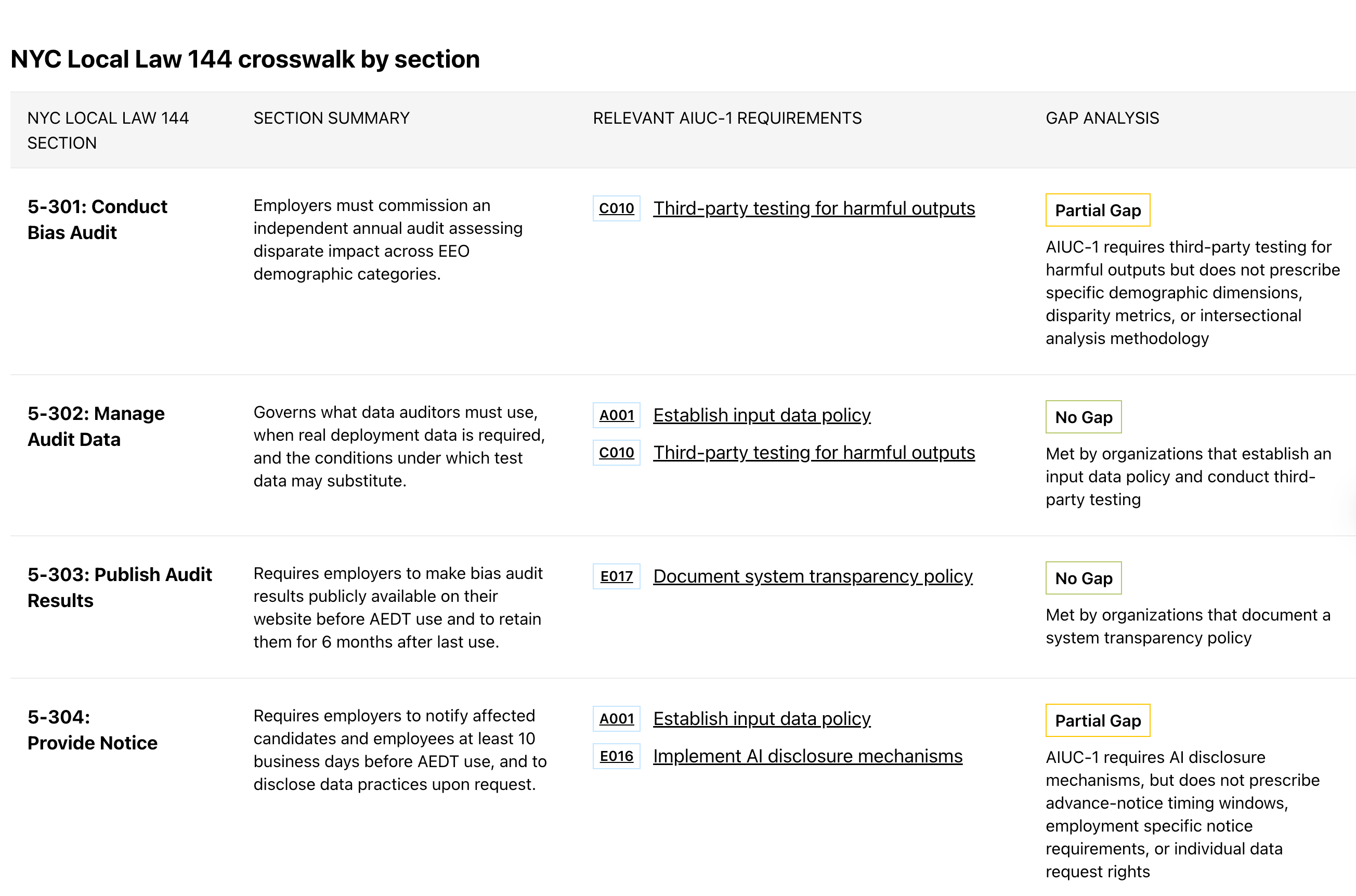

NYC Local Law 144 – In effect since July 2023

NYC Local Law 144 requires any employer or employment agency using an automated employment decision tool (AEDT) in hiring or promotion decisions to commission an independent annual bias audit, publish the results, and notify affected candidates at least 10 business days before the tool is used. It is the first municipal law in the United States to directly regulate AI in employment contexts. Its implementing rules, issued by the NYC Department of Consumer and Worker Protection, serve as the operative compliance framework.

AIUC-1 controls align closely with the key sections of NYC Local Law 144. The standard's quarterly third-party testing cycle prepares organizations to meet the law's mandate for independent annual bias audits, though it does not prescribe the specific audit methodology for assessing disparate impact across U.S. Equal Employment Opportunity category groups. AIUC-1 also provides AI disclosure and data policy controls that align with the law's publishing and candidate notification obligations. See crosswalk here.

Colorado AI Act (SB 24-205) – Effective from June 30, 2026

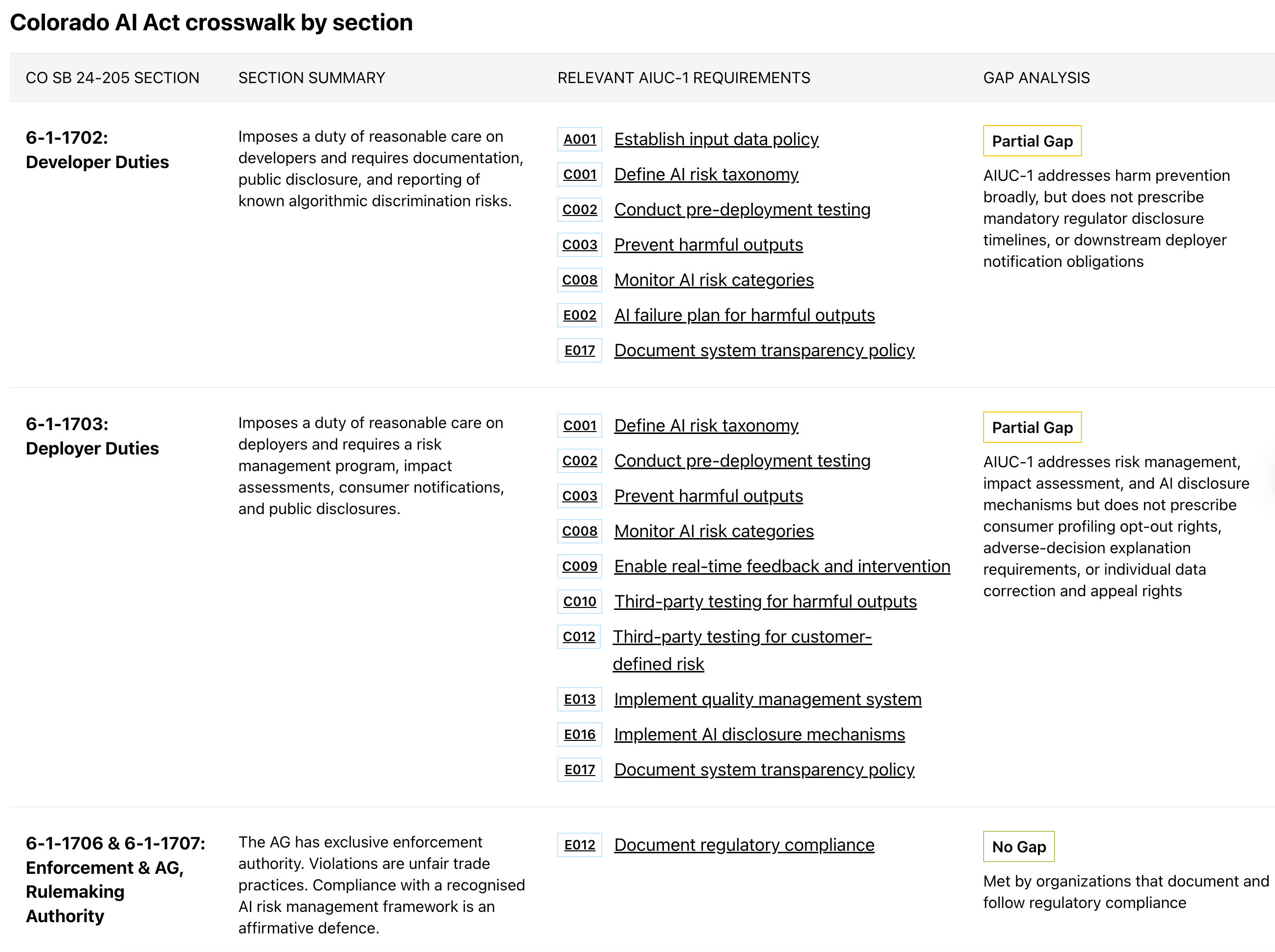

Colorado's AI Act is the first comprehensive U.S. state law regulating AI systems used in consequential decisions - defined broadly to include employment, education, financial services, healthcare, housing, insurance, and legal services. It imposes duties of reasonable care on both developers and deployers of high-risk AI systems, requiring risk management programs, structured impact assessments, consumer notifications, and public disclosures.

The Act is structurally similar to the EU AI Act and explicitly recognizes compliance with NIST AI RMF or ISO 42001 as an affirmative defense. The effective date was delayed from February 1 to June 30, 2026; further legislative refinements remain possible, but the core obligations - risk management, impact assessment, transparency - reflect durable governance principles that are unlikely to be unwound.

AIUC-1 translates the Colorado AI Act's obligations into actionable and auditable controls. The Act's duty of reasonable care for developers and deployers is supported by AIUC-1’s organizational requirements to define AI risk taxonomies, implement pre-deployment testing, maintain ongoing risk monitoring, conduct third-party testing and more. See crosswalk here.

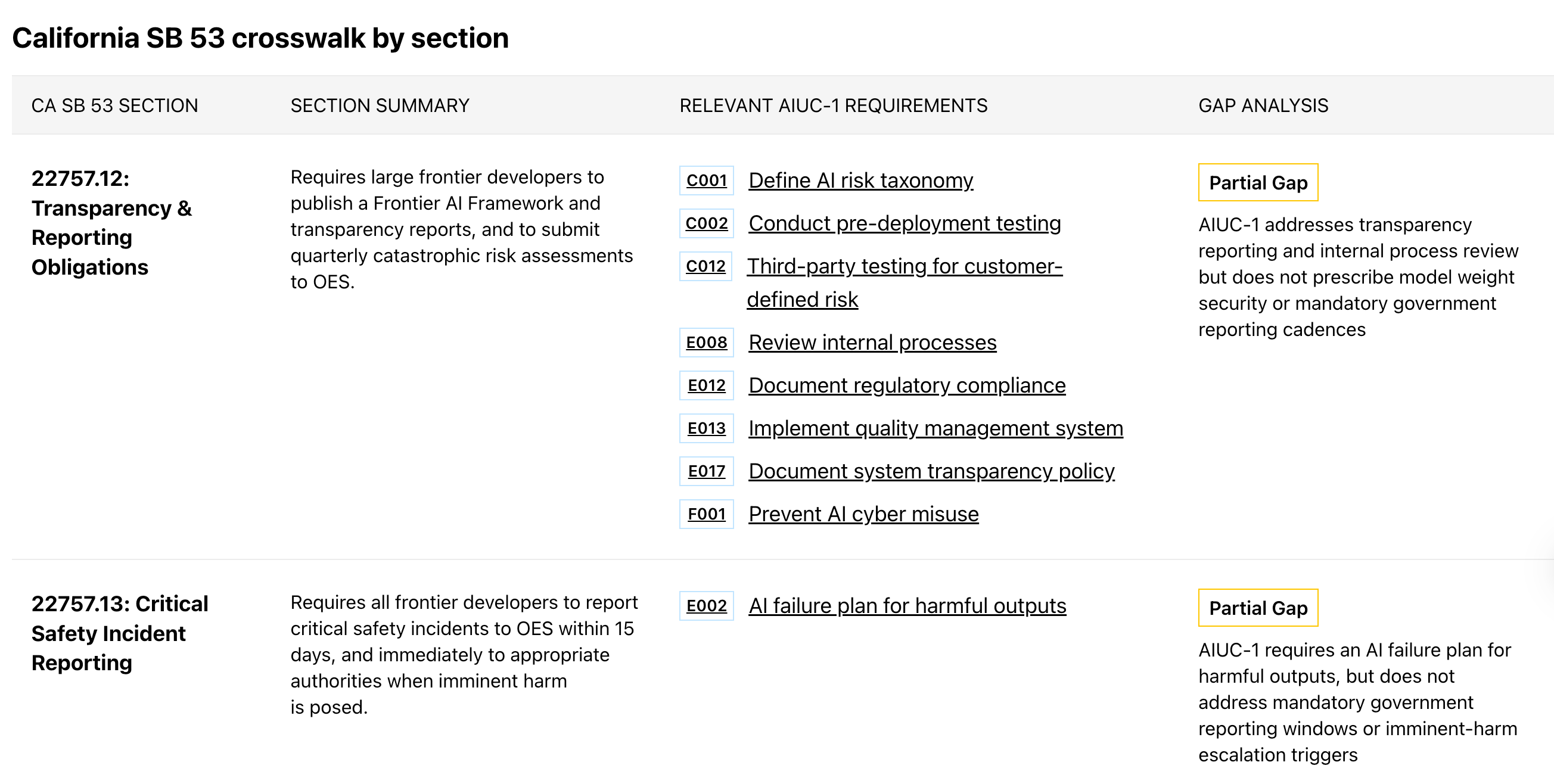

California TFAIA (SB 53) – In effect since January 1, 2026

California's TFAIA is the first frontier model safety law in the country to take effect. It applies to developers of foundation models trained using more than 10²⁶ FLOPs, with enhanced obligations for those with annual gross revenues exceeding $500 million. Core requirements include publishing a Frontier AI Framework covering catastrophic risk assessment and mitigation, reporting critical safety incidents to the California Office of Emergency Services within 15 days, and protecting employee whistleblowers who disclose safety concerns. Civil penalties reach up to $1 million per violation.

Given the regulation's current focus on frontier model developers, companies certifying against AIUC-1 are typically out of scope for compliance obligations under California's TFAIA. See crosswalk here.

AIUC-1 prepares organizations for compliance with emerging legislation

Certifying against AIUC-1 means organizations are not starting from zero when preparing to comply with NYC Local Law 144 and the Colorado AI Act. The crosswalks create a direct line of sight from each regulatory requirement to the relevant AIUC-1 controls, including where additional work is required.

Looking ahead

AIUC-1 is updated quarterly so that new regulatory obligations are integrated where they align with the standard’s focus on AI agent security, safety and reliability. Federal and additional state activity is being actively monitored, and the crosswalk library will be expanded as legislation matures.

Read more and access the detailed crosswalks here.

All crosswalks are provided for informational purposes only and do not constitute legal advice. Organizations should always consult qualified legal counsel to determine their specific compliance obligations.
