OWASP AI Vulnerability Scoring System integrates AIUC-1

The partnership enables organizations to quickly identify risks associated with AI agent deployments, quantify those risks, and prioritize mitigations with AIUC-1.

With agentic AI dominating headlines – from OpenClaw and Moltbook to Meta’s acquisition of Manus – enterprises are racing to deploy autonomous agents as digital workers. But the properties that make agents valuable – such as autonomy, persistence, and broad tool access – are exactly what make vulnerabilities in them so much harder to contain.

The same SQL injection vulnerability creates radically different risk profiles depending on deployment context. In a read-only reporting agent, it's a data leak. In an autonomous trading agent with broad tool access and persistent memory, it becomes a systemic threat capable of cascading failures across connected systems.

Today, organizations have no standardized way to quantify these differences. Without scoring mechanisms that account for agent-specific factors amplifying the risk surface - such as autonomy, tool access, and memory persistence - security teams struggle to prioritize mitigations or communicate risk severity to stakeholders.

Introducing the OWASP AI Vulnerability Scoring System

The OWASP AI Vulnerability Scoring System (AIVSS) was developed specifically to solve this problem. It provides a comprehensive risk scoring methodology that extends traditional vulnerability assessment (CVSS) by quantifying how agentic capabilities amplify security risks.

AIVSS produces standardized numerical scores (0-10) that combine technical vulnerability severity with agent-specific characteristics. This enables organizations to quickly identify their top risks and prioritize resources to mitigate them, and it gives them a concrete risk reporting tool for executive audiences.

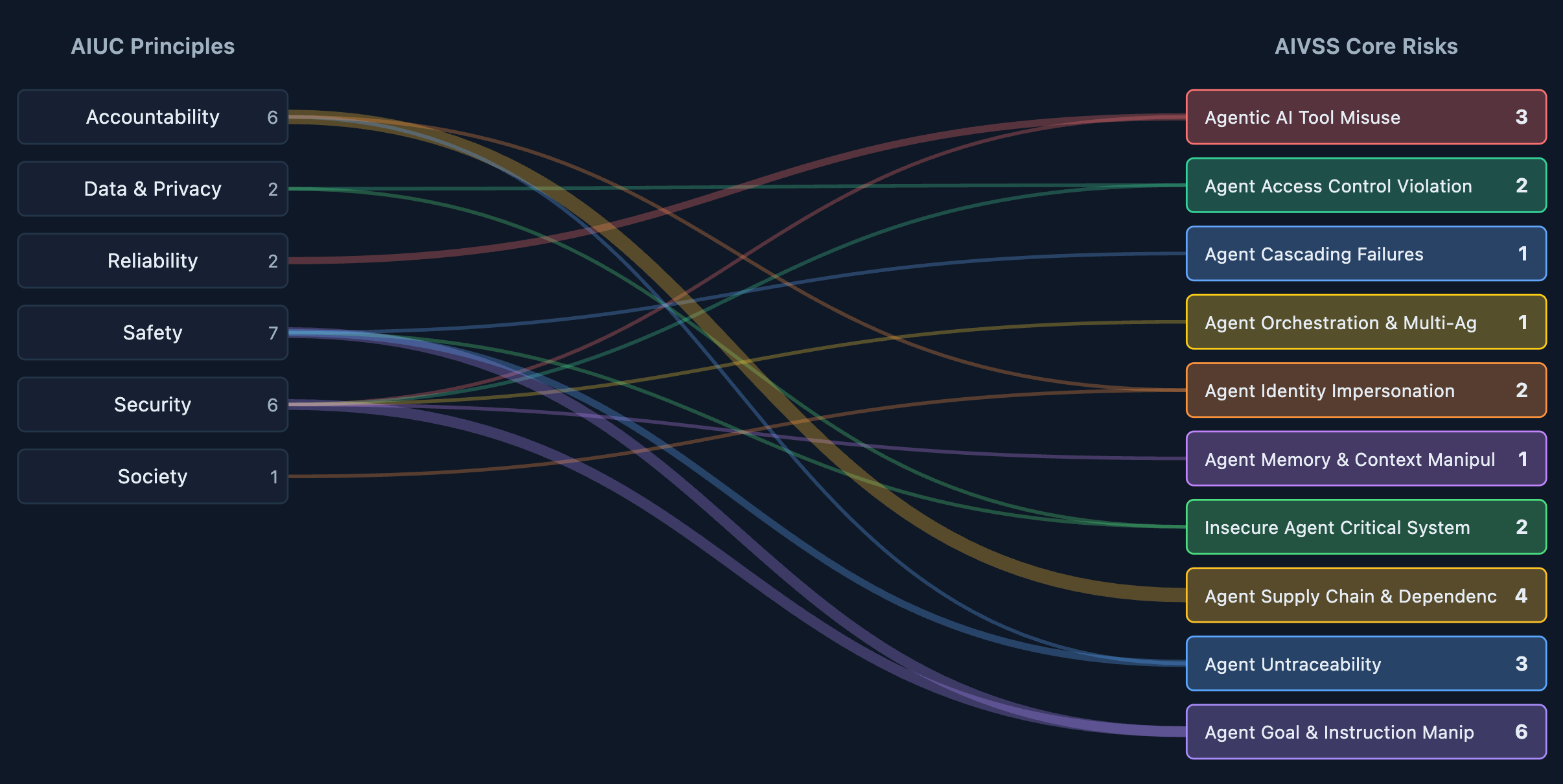

AIVSS scoring is built on ten core security risks unique to autonomous agents:

- Agentic AI Tool Misuse - Compromised tool selection, insecure invocation, or lack of oversight

- Agent Access Control Violation - Permission escalation, credential mismanagement, or role inheritance exploitation

- Agent Cascading Failures - Cross-system exploitation where one compromised agent propagates damage

- Agent Orchestration and Multi-Agent Exploitation - Attacks targeting coordination mechanisms between agents

- Agent Identity Impersonation - Spoofing of agent or human identities through deepfakes or credential theft

- Agent Memory and Context Manipulation - Poisoning persistent memory or exploiting context drift

- Insecure Agent Critical Systems Interaction - Unauthorized manipulation of infrastructure, IoT, or operational technology

- Agent Supply Chain and Dependency Risk - Compromised models, libraries, or third-party tools

- Agent Untraceability - Inability to audit agent decision chains or attribute actions

- Agent Goal and Instruction Manipulation - Prompt injection and semantic hijacking of agent objectives

Highlighting amplification factors for AI agent risk

AIVSS recognizes that agentic capabilities don't just add new risks - they act as force multipliers that expand the blast radius of existing vulnerabilities.

The framework quantifies ten amplification factors on a standardized scale:

- Execution Autonomy

- External Tool Control Surface

- Natural Language Interface

- Contextual Awareness

- Behavioral Non-Determinism

- Opacity & Reflexivity

- Persistent State Retention

- Dynamic Identity

- Multi-Agent Interactions

- Self-Modification

Each factor receives a score (0.0, 0.5, or 1.0) based on the agent's actual implementation. These scores feed into a mathematical model that calculates the Agentic AI Risk Score (AARS), which combines with the technical CVSS base score to produce the final AIVSS rating.

This transforms agent security from qualitative descriptions into quantifiable metrics that CISOs can defend to the board and integrate into existing risk management frameworks.
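The scoring flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the official AIVSS model: it assumes the AARS is the plain sum of the ten factor scores (giving a 0-10 range) and that the final rating is a simple average of the CVSS base score and the AARS. The function names, factor keys, and example agents are hypothetical; the published AIVSS specification defines the actual calculation, which may weight or adjust these terms differently.

```python
# The ten amplification factors named in the article (keys are illustrative).
FACTORS = (
    "execution_autonomy",
    "external_tool_control_surface",
    "natural_language_interface",
    "contextual_awareness",
    "behavioral_non_determinism",
    "opacity_and_reflexivity",
    "persistent_state_retention",
    "dynamic_identity",
    "multi_agent_interactions",
    "self_modification",
)
ALLOWED_SCORES = {0.0, 0.5, 1.0}


def aars(factor_scores: dict) -> float:
    """ASSUMPTION: AARS is the sum of the ten factor scores (0-10)."""
    missing = [name for name in FACTORS if name not in factor_scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    for name in FACTORS:
        if factor_scores[name] not in ALLOWED_SCORES:
            raise ValueError(f"{name} must be 0.0, 0.5, or 1.0")
    return sum(factor_scores[name] for name in FACTORS)


def aivss_score(cvss_base: float, factor_scores: dict) -> float:
    """ASSUMPTION: final score is the average of CVSS base and AARS."""
    if not 0.0 <= cvss_base <= 10.0:
        raise ValueError("CVSS base score must be between 0 and 10")
    return round((cvss_base + aars(factor_scores)) / 2, 1)


# Same technical vulnerability (hypothetical CVSS base 8.0), two deployments.
reporting_agent = dict.fromkeys(FACTORS, 0.0)
reporting_agent.update(natural_language_interface=1.0, contextual_awareness=1.0)

trading_agent = dict.fromkeys(FACTORS, 1.0)
trading_agent.update(dynamic_identity=0.0, self_modification=0.0)

reporting_score = aivss_score(8.0, reporting_agent)
trading_score = aivss_score(8.0, trading_agent)
print(reporting_score, trading_score)
```

Under these assumptions, the read-only reporting agent scores well below the autonomous trading agent even though the underlying CVSS base score is identical, which is the contextual difference the framework is meant to surface.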

Integrating AIUC-1 to proactively mitigate risks

AIUC-1 - the standard for AI agent safety, security, and reliability - offers a comprehensive list of controls, enabling security leaders to quickly move from risk identification to risk mitigation. Because both AIUC-1 and the AIVSS are focused on AI agents specifically, the risks map directly to controls and don’t require an additional layer of translation.

The power of AIUC-1 lies in its specificity. Rather than broad control objectives like "implement access controls," AIUC-1 requires organizations to demonstrate concrete practices: technical controls to prevent tool calls in AI systems from executing unauthorized actions, groundedness filtering to mitigate hallucinations, and monitoring and logging to ensure agent actions are traceable.

The complete mapping between AIVSS and AIUC-1 creates a direct workflow from risk identification to control implementation. When AIVSS scoring reveals high-severity risks in specific categories - for example, Agent Access Control Violation (AIVSS score 9.7) - the mapping points security teams to the precise AIUC-1 requirements that mitigate those risks.

A new approach for proactive AI agent risk management

With this partnership, organizations can now follow a structured workflow:

Step 1: Quantify risk using AIVSS. Score agent deployments against the ten core risks, incorporating system-specific amplification factors to generate numerical severity ratings.

Step 2: Identify relevant AIUC-1 requirements. Use the mapping to translate high-severity AIVSS findings into specific AIUC-1 requirements that address root causes.

Step 3: Obtain AIUC-1 certification. Pursue full AIUC-1 certification, including the required technical testing. This provides third-party validation that controls have been implemented, along with technical test results demonstrating that those controls work when agents are deployed in the real world.

This workflow transforms AI agent security from reactive incident response into proactive risk management. Security teams gain the language and metrics to justify budget allocation. Auditors gain clear evaluation criteria. Executives gain confidence that agent deployments meet institutional risk tolerances.
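Steps 1 and 2 of the workflow can be sketched as a simple triage routine. The sketch below is illustrative only: the `CROSSWALK` pairings, the 7.0 severity threshold, and every score except the 9.7 cited earlier are hypothetical, and the control descriptions paraphrase this article rather than quote AIUC-1 requirement identifiers. The real mapping is the one published in the AIUC-1 Crosswalks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    risk: str     # one of the ten AIVSS core risks
    aivss: float  # AIVSS score for this deployment (0-10)


# HYPOTHETICAL crosswalk: these pairings are placeholders for illustration,
# not the published AIVSS-to-AIUC-1 mapping.
CROSSWALK = {
    "Agent Access Control Violation": [
        "Technical controls preventing unauthorized tool-call actions",
    ],
    "Agentic AI Tool Misuse": [
        "Technical controls preventing unauthorized tool-call actions",
    ],
    "Agent Untraceability": [
        "Monitoring and logging so agent actions are traceable",
    ],
}


def prioritize(findings, threshold=7.0):
    """Return (finding, controls) pairs at or above the threshold,
    highest score first. The threshold is an assumed cutoff."""
    severe = [f for f in findings if f.aivss >= threshold]
    severe.sort(key=lambda f: f.aivss, reverse=True)
    return [(f, CROSSWALK.get(f.risk, [])) for f in severe]


findings = [
    Finding("Agent Untraceability", 6.1),            # hypothetical score
    Finding("Agent Access Control Violation", 9.7),  # score cited in the article
    Finding("Agentic AI Tool Misuse", 7.4),          # hypothetical score
]

plan = prioritize(findings)
for finding, controls in plan:
    print(f"{finding.aivss:>4}  {finding.risk}: {controls}")
```

Sorting by score before looking up controls keeps the output aligned with how a security team would work through the list: the highest-severity finding and its candidate requirements come first.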

The AIVSS-AIUC-1 integration is available now via the AIVSS website and as part of AIUC-1 Crosswalks.

About AIUC-1: The standard for AI agent safety, security, and reliability. Built with Technical Contributors from trusted institutions including MIT, Stanford, Google Cloud, Cisco, Orrick, and the Cloud Security Alliance. Read more at aiuc-1.com.

About OWASP AIVSS: The OWASP AI Vulnerability Scoring System (AIVSS) is a standardized framework for assessing and quantifying security risks in AI systems, with a specific focus on agentic AI architectures. Read more at aivss.owasp.org.
